In 2026, making media—whether it’s a short clip for TikTok, a polished YouTube video, a podcast episode, or even a short film—feels less like wrestling with heavy software and more like having a creative partner who never sleeps. I’ve spent the last couple of years experimenting with these tools in my own small setup here in Karachi, turning late-night ideas into content without a full crew or studio. What started as curiosity has become routine: prompt an AI for visuals, clean up audio in seconds, generate voiceovers that sound human, and edit footage faster than I ever could manually.

The shift happened gradually. Back in 2024-2025, AI was mostly gimmicky—fun for memes but unreliable for anything professional. Now, the models have matured. Physics in motion looks believable, lips sync almost perfectly, and the output holds up on big screens or small phones. Creators who embrace these tools aren’t replacing their skills; they’re multiplying them. A solo filmmaker can storyboard, shoot concepts, add effects, and finalize in days instead of months.

Here are eight must-know AI media production tools that stand out in 2026. These aren’t hype picks; they’re the ones real people (YouTubers, podcasters, marketers, indie directors) actually rely on daily. I’ll walk through what each does best, how it fits into workflows, real-world quirks, and why it matters now.

Runway stands tall for anyone serious about cinematic video generation and editing.

Runway’s Gen-4 (and the newer Gen-4.5 updates) changed how people think about text-to-video. You type a description, such as “a bustling Karachi street at dusk with neon signs reflecting on wet pavement, camera tracking slowly from low angle,” and it generates coherent clips with realistic motion, lighting, and depth. The real power comes in editing features: motion brush lets you paint areas to animate differently, like making rain fall harder in one part of the frame while keeping the rest still. Inpainting fills gaps seamlessly, and lip-sync tools match generated mouths to any audio track.

I used it last month for a short promo: I fed in a script, generated base scenes from images I’d shot, then used the motion brush to add dynamic elements like moving traffic. Exports reach 4K, with no watermarks on paid plans. Pricing starts around $15/month for the standard plan, with unlimited tiers higher up. The free trial gives limited credits: enough to test, but not to produce much.

Quirks: Prompt adherence improved massively, but complex multi-character scenes can still glitch on consistency. Best for filmmakers who want control, not pure one-click magic. Pair it with your own footage for hybrid workflows—many pros do this to cut costs on VFX.

Google Veo (now in Veo 3.1 or Flow integrations) delivers reliable, high-fidelity video from prompts or images.

Google’s Veo family excels at consistency and physics-aware motion. Drop a prompt like “a young woman walking through a crowded bazaar in Lahore, bargaining with a vendor, natural lighting, handheld camera feel,” and the output sticks close without wild deviations. It handles long clips better than most (up to minutes in some modes) and is strong on cinematic styles: dolly zooms, slow-motion, subtle camera moves.
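If you want to keep prompts like the one above consistent from shot to shot, one option is to build them from named parts. The sketch below is only plain string composition, not a Veo or Flow API; the `ShotPrompt` class and its field names are hypothetical, chosen to mirror the pieces of that example prompt.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """Hypothetical helper: holds the parts of a video prompt as separate fields."""
    subject: str
    action: str = ""
    lighting: str = ""
    camera: str = ""

    def render(self) -> str:
        # Join the non-empty parts with commas, in a fixed order.
        parts = [self.subject, self.action, self.lighting, self.camera]
        return ", ".join(p.strip() for p in parts if p.strip())


prompt = ShotPrompt(
    subject="a young woman walking through a crowded bazaar in Lahore",
    action="bargaining with a vendor",
    lighting="natural lighting",
    camera="handheld camera feel",
)
print(prompt.render())
```

Keeping the parts separate makes it easy to change only the camera or lighting between iterations while the subject stays fixed. You would still paste the rendered text into whichever tool you use.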

In 2026, Veo integrates into Google Flow for storyboard-to-final workflows. You build scenes sequentially, tweak camera angles, add transitions. For creators, this means concepting a full short film sequence without shooting a frame. Access comes via Google AI Pro (around $20/month), with higher tiers removing limits.

One creator I follow used Veo to prototype music videos: generated visuals from lyrics, refined in Flow, then overlaid real audio. Results looked production-ready. Downsides: occasional artifacts in fast action, and access is still invite-only or limited in some regions, though availability is wider now. It is a strong pick when you want reliable results over experimental chaos.

OpenAI’s Sora turns narratives into cohesive video stories.

Sora, especially Sora 2, focuses on storytelling. Feed it a detailed script or scene description, such as “a detective in a rainy cyberpunk city chases a suspect across rooftops, neon lights flickering, dramatic thunder,” and it builds narrative flow with emotional beats, consistent characters, and dialogue sync. It understands cause and effect better: if a character throws something, physics and reactions follow logically.

For indie filmmakers or marketers, it’s gold for concept testing or full shorts. ChatGPT Plus ($20/month) includes access; Pro tiers bump quality and length. For social media clips, ads, and training videos, Sora nails realism without looking too “AI.”

A friend in content marketing generated product explainers: script in, Sora out, minor tweaks in CapCut. Lip movements and expressions improved hugely in 2026 updates. Limitations: watermarks on base plans, occasional uncanny valley in faces. Best when you guide it tightly with prompts.

ElevenLabs leads in voice generation and cloning for audio production.

Audio is half the battle in media. ElevenLabs clones voices from short samples—your own or licensed—and generates natural speech in 29+ languages, including Urdu with regional accents. Emotional range covers excited, calm, angry; prosody adjusts for emphasis.

Podcasters use it for intros/outros, YouTubers for voiceovers when camera-shy. Multilingual dubbing shines: record in English, clone, generate in other languages. Studio-quality output, low latency.

Pricing: free tier limited, paid starts low with credits. I cloned my voice for a series—sounded eerily like me, even with pauses and breaths. Ethical note: watermarking and consent tools improved, but use responsibly. Pairs perfectly with video tools for full dubbed content.

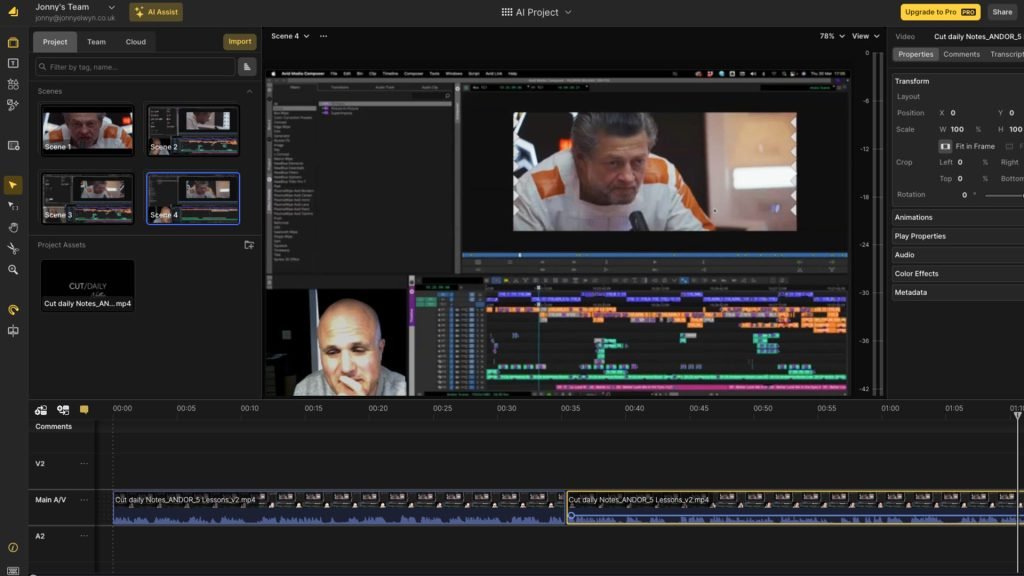

Descript transforms audio/video editing into text-based magic.

Descript treats video like a Google Doc. Transcribe, edit the text, and the cuts sync automatically. Remove filler words (“um,” “ah”) with one click; Overdub regenerates speech in your cloned voice for fixes. Studio Sound cleans noisy audio, which is great for Karachi’s background traffic or echoey rooms.
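To make the idea of text-based editing concrete, here is a minimal sketch of how deleting words from a timestamped transcript can translate into cuts on the timeline. It is not Descript’s implementation or API; the `Word` class, the `FILLERS` set, and `keep_segments` are hypothetical names, and the timestamps are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the recording
    end: float


# Assumed filler list for this sketch; a real tool would be more thorough.
FILLERS = {"um", "uh", "ah"}


def keep_segments(words, fillers=FILLERS):
    """Return (start, end) time ranges to keep after dropping filler words.

    Consecutive kept words are merged into one range; each removed
    filler closes the current range, which is where a cut would go.
    """
    segments = []
    open_segment = False
    for w in words:
        token = w.text.lower().strip(".,!?")
        if token in fillers:
            open_segment = False  # a cut happens here
            continue
        if open_segment:
            start, _ = segments[-1]
            segments[-1] = (start, w.end)  # extend the current range
        else:
            segments.append((w.start, w.end))
            open_segment = True
    return segments


transcript = [
    Word("So", 0.0, 0.3),
    Word("um,", 0.3, 0.8),
    Word("Karachi", 0.8, 1.4),
    Word("traffic", 1.4, 1.9),
    Word("ah", 1.9, 2.3),
    Word("never", 2.3, 2.7),
    Word("stops.", 2.7, 3.2),
]
print(keep_segments(transcript))
# [(0.0, 0.3), (0.8, 1.9), (2.3, 3.2)]
```

The three ranges are the pieces of audio or video that survive; an editor would then stitch them together in order.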

For podcasters or vloggers, it’s a time-saver. Record raw, edit transcript, add AI B-roll or eye contact correction. 2026 updates added better multi-track handling and AI clip suggestions.

Free tier generous, paid unlocks more voices and features. One YouTuber I know repurposes hour-long interviews into shorts in under 30 minutes. Drawback: learning curve for advanced features, but basics are intuitive.

Midjourney (with video extensions) and Flux variants dominate AI image generation for assets.

Images feed everything: thumbnails, storyboards, backgrounds. Midjourney v6+ and community Flux models produce stunning stills, from photorealistic portraits to cinematic concepts and abstract art. Whether you work through Discord or a web interface, careful prompt engineering yields pro results.

For filmmakers: generate key frames, then animate them in Runway or Veo. Creators use them for mood boards or as direct video input. Free trials are available, and paid subscriptions are reasonably priced.

Adobe Firefly integrates into Premiere/After Effects for seamless workflows.

Adobe’s Firefly powers generative fill and text-to-image/video inside familiar tools. Extend shots, remove objects, and create assets without leaving your timeline. For pros transitioning to AI, it’s the least disruptive option.

Subscription via Creative Cloud. Strong brand controls, ethical training data. Best for hybrid human-AI production.

Higgsfield.ai bundles top models into one studio for all-in-one creation.

Higgsfield aggregates Sora, Kling, Veo, and Runway models, so you can switch between them seamlessly. It offers character consistency across scenes and one-click refinements. For creators juggling tools, it saves time.

Subscription model, often praised for filmmaker features like shot lists. Emerging favorite in 2026 workflows.

These tools (Runway, Veo, Sora, ElevenLabs, Descript, Midjourney/Flux, Adobe Firefly, and Higgsfield) form a modern toolkit. Start with one or two that match your bottleneck: video gen for visuals, audio for polish, editing for speed.

A solo creator I know went from monthly uploads to weekly by chaining them: Midjourney concepts → Veo clips → Descript edit → ElevenLabs voice. Output quality rivals small teams.

The real secret? These tools amplify creativity, not replace it. Prompt well, iterate, combine with your touch. In Karachi’s vibrant scene of vloggers, musicians, and marketers, these tools level the playing field. Experiment, stay ethical, and watch your production soar in 2026.